Why enterprise AI adoption depends on literacy, strong DevOps, guardrails, and a full value stream mindset


My current view

AI is being discussed as if it were mostly a coding story. It is not. At the enterprise level, AI is a system story.

AI can help generate more code, but the more important question is how well the organization can absorb that acceleration across the full path from idea to outcome, not just in delivery, without increasing risk and fragility elsewhere.

That is why my view is simple: AI is a multiplier. In strong systems, it accelerates value, learning, and delivery. In weak systems, it accelerates defects, risk, and fragility.

Enterprise AI adoption has to start with literacy, strong DevOps, clear guardrails, a full value stream mindset, and human accountability.

I have been making that case for a while.

In late 2024 and throughout 2025, much of the AI conversation focused on adoption rates, usage metrics, and individual productivity gains. My focus was elsewhere. I was more interested in the delivery system itself: how work flows from idea to production, how teams maintain operational resilience, and whether organizations were building the discipline needed to absorb acceleration safely.

More leaders are recognizing that now. They are starting to see that AI adoption is a system conversation.

AI is reshaping how code is written and how technology organizations think about the full system that delivers software.

To truly leverage what AI can do, organizations have to get exceptionally good at DevOps so code can move from idea to production quickly, reliably, consistently, and securely. That is where the real work is.

Put another way: AI reveals the truth about your delivery system.

If organizations apply AI only to code generation while ignoring the surrounding system, they may get local gains while causing broader dysfunction. Faster code does not automatically mean better delivery. In many cases, it just means friction arrives sooner.

AI adoption starts with literacy

The question for most companies is no longer whether AI matters. It does. The question is how to begin adopting it practically and responsibly.

That starts with exposure, hands-on use, and shared learning. Teams need time to understand the tools, compare options, and build judgment through real experience. AI judgment does not come from a memo. It comes from using the tools, seeing where they help, seeing where they fail, and learning together.

But fluency with the tools is not enough on its own.

Without engineering depth, judgment, and systems thinking, AI adoption gets shallow fast. Organizations still need strong fundamentals to make AI durable, especially in enterprise environments where architecture, scale, resilience, security, and operational consequences are real.

Leadership still matters

Strong leadership matters even more in an AI-enabled environment.

The old model, which overvalued the technical leader who could personally grind out the most code, was already limited. In an AI-enabled environment, it becomes even less useful.

What matters more now is the ability to build strong teams, create repeatable systems, develop experienced people, and lead through change.

Experienced engineers become force multipliers when paired with AI. Their value is not just in what they personally type. Their value is in what they can shape, guide, review, and orchestrate across increasingly AI-enabled workflows.

And accountability does not move to the model. That line matters because it cuts through a lot of lazy thinking. Humans remain accountable for what AI helps create. Teams still own quality, security, operability, and outcomes. The model can assist. It can accelerate. It can suggest. But it does not own the result.

AI also does not remove the need for strong architects and engineers. At least today, enterprise AI still depends on experienced technical people who can think through complex problems, shape sound solutions, and orchestrate what AI helps build. That is especially true in environments that demand concurrency, scale, resilience, security, and high-volume performance.

The leaders who create the most value with AI will be the ones who understand people, systems, flow, and change.

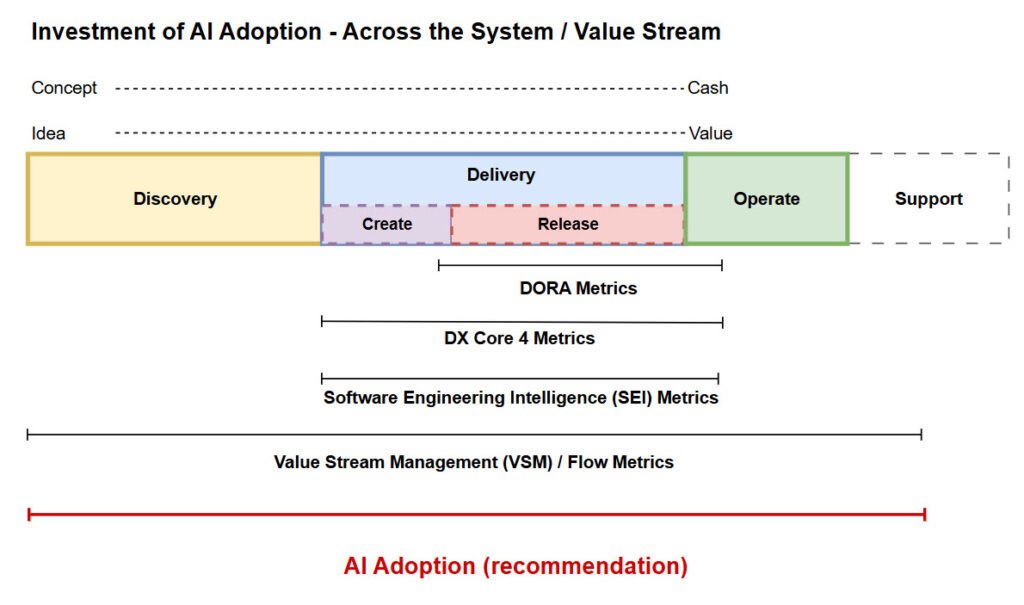

AI across the value stream

I say this not only as a technology leader, but as a technologist and active user of AI. I use it. I see how it can accelerate thinking, improve analysis, and increase the leverage of experienced professionals.

But I also see the temptation to confuse more activity with more value. More AI-generated work does not automatically improve outcomes.

Without strong systems and disciplined ways of working, AI can create the appearance of progress while increasing waste and risk beneath the surface.

That is why I keep coming back to the same point: AI is a value stream conversation.

Thinking across the value stream means more than coding and delivery. It includes upstream work in product ideation, discovery, market fit, legal and compliance review, prioritization, and design, as well as downstream work in development, deployment, operations, support, and learning.

If enterprises want the full benefit, they have to think beyond build speed and look at the full path from idea to outcome. Requirements matter. Design matters. Testing matters. Deployment matters. Operability matters. Feedback loops matter.

Guardrails are the structure that enables speed. They are not bureaucracy. They are what lets teams move faster without creating avoidable risk.

AI also will not fix unclear priorities. If coding speeds up but lead time does not improve, the constraint lies elsewhere in the system: decision latency, dependencies, validation, operability, ownership, or handoffs.
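The arithmetic behind that point can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the stage names, the hour figures, and the helper names (`Stage`, `lead_time`, `constraint`, `with_faster_stage`) are all hypothetical, and the model treats a value stream as a simple sequence of stages with active time and waiting time.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    """One step in a hypothetical value stream."""
    name: str
    active_hours: float   # hands-on work time
    waiting_hours: float  # time queued, blocked, or awaiting a decision

    @property
    def total_hours(self) -> float:
        return self.active_hours + self.waiting_hours


def lead_time(stages):
    """End-to-end elapsed hours from idea to outcome."""
    return sum(s.total_hours for s in stages)


def constraint(stages):
    """The stage that contributes the most elapsed time."""
    return max(stages, key=lambda s: s.total_hours)


def with_faster_stage(stages, name, speedup):
    """Return a copy where one stage's active work is `speedup` times faster.

    Waiting time is left unchanged, which is the point of the sketch.
    """
    return [
        Stage(s.name, s.active_hours / speedup, s.waiting_hours)
        if s.name == name else s
        for s in stages
    ]


# Illustrative numbers only, not measurements from any real team.
baseline = [
    Stage("prioritization", active_hours=4, waiting_hours=76),
    Stage("coding", active_hours=40, waiting_hours=8),
    Stage("review_and_validation", active_hours=8, waiting_hours=64),
    Stage("deployment", active_hours=2, waiting_hours=38),
]

# Assume AI makes hands-on coding four times faster and changes nothing else.
ai_assisted = with_faster_stage(baseline, "coding", speedup=4)

improvement = 1 - lead_time(ai_assisted) / lead_time(baseline)
print(f"Lead time: {lead_time(baseline)}h -> {lead_time(ai_assisted)}h "
      f"({improvement:.1%} better)")
print(f"Constraint after the speedup: {constraint(ai_assisted).name}")
```

With these made-up numbers, a fourfold coding speedup shortens end-to-end lead time by only 12.5 percent, and the largest contributor remains the waiting time around prioritization rather than anything in the build step.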

AI, operations, and the human loop

A lot of today’s AI discussion centers on whether engineers will be replaced or whether teams will become much smaller.

Some of that may happen, especially if organizations optimize the system that moves software from idea to production. But software delivery does not end at production.

When the enterprise system fails, who gets the call? Who understands the architecture, the dependencies, the customer impact, and the safest path to recovery?

AI may increasingly help diagnose incidents and accelerate response. That is useful. But at an enterprise level, operating and maintaining production systems still requires human judgment, accountability, and deep contextual knowledge.

Speed without operational resilience is just risk in a different form.

If AI helps teams build faster but weakens operational depth, resilience, or human ownership, then the organization has not matured. It has just shifted risk.

Software does not stop at deployment. Enterprise responsibility includes operating, maintaining, restoring, and improving systems in production.

There is also a difference between efficiency and fragility. If AI-driven efficiency reduces teams to the point where one or two people become the operational lifeline for production, the organization has not eliminated risk. It has concentrated it.

A smaller team is not automatically a stronger or more cost-effective system. If production resilience depends on a handful of people, the enterprise may be running lean but also fragile.

How I approach AI adoption

My approach is practical.

Start by defining risk boundaries. Know which data can be used, which cannot leave the environment, and which require review.

Choose tools with intent. Different use cases require different tools, trust levels, and constraints.

Build literacy through real use. Hands-on learning, shared practices, and room for experimentation matter.

Integrate AI into the delivery system itself. Managed context, better requirements, stronger tests, safer reviews, and faster debugging are where practical value begins to show up.

Add evaluation workflows. AI-assisted acceleration still needs checks for correctness, security, regressions, and operational impact.

And measure the system.

That last point matters more than many teams realize. System-level outcomes matter more than individual output metrics. It is easy to get distracted by visible activity. What matters is whether the system moves faster end-to-end with acceptable quality, reliability, and learning.

Measure the system, not the individual.
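As a rough sketch of what measuring the system could look like, the example below computes two end-to-end figures from a list of delivered changes: median lead time from accepted idea to production, and the share of changes that caused an incident. The choice of these two measures, the `Change` record, its field names, and the sample dates are assumptions made for illustration; real tracking and deployment data will look different.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass(frozen=True)
class Change:
    """A hypothetical record of one change that reached production."""
    idea_accepted: datetime
    deployed: datetime
    caused_incident: bool


def median_lead_time_days(changes):
    """Median elapsed days from accepted idea to production deployment."""
    if not changes:
        raise ValueError("need at least one change")
    return median(
        (c.deployed - c.idea_accepted).total_seconds() / 86400
        for c in changes
    )


def change_failure_rate(changes):
    """Fraction of deployed changes that led to a production incident."""
    if not changes:
        raise ValueError("need at least one change")
    return sum(c.caused_incident for c in changes) / len(changes)


# Illustrative sample data, not real measurements.
changes = [
    Change(datetime(2025, 1, 6), datetime(2025, 1, 16), False),
    Change(datetime(2025, 1, 8), datetime(2025, 1, 28), True),
    Change(datetime(2025, 2, 3), datetime(2025, 2, 17), False),
    Change(datetime(2025, 2, 10), datetime(2025, 3, 4), False),
]

print(f"Median lead time: {median_lead_time_days(changes)} days")
print(f"Change failure rate: {change_failure_rate(changes):.0%}")
```

Neither figure says anything about how much any one person produced. Tracked over time, they show whether the whole path is getting faster without trading away reliability.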

The real question

I am optimistic about AI. I have been using it since February 2023, and I have seen especially significant gains in the more recent wave of frontier models and agents. I can already see how it is reshaping how modern organizations work.

But I am also practical. AI will not rescue a broken operating model. It will reveal it.

The organizations that benefit most will be those with the strongest systems, clearest priorities, healthiest teams, and the most disciplined path from idea to outcome.

So the real question is not whether AI can generate more output.

The real question is this:

Is your system strong enough for AI to make you better, or will it simply make your existing problems move faster?

If you are working through AI adoption at the enterprise level, especially in the real-world context of delivery systems, trade-offs, risk, and operational responsibility, I am always interested in comparing notes.

This is one of the most important leadership conversations happening right now, and it is still too often framed narrowly.

Poking Holes

I invite your perspective on my posts. What are your thoughts?

Let’s talk: phil.clark@rethinkyourunderstanding.com