What are we trying to improve? The adoption of practices to find security vulnerabilities early in the development lifecycle.

What outcome do we hope to achieve? Additional security coverage, where applicable, earlier in the software development lifecycle.

Let’s Shift Left

Are you familiar with the term “shift left”? It is a popular concept in the tech industry for good reasons. I define shift left as enabling the earliest feedback. It’s about determining if your code modification is functioning as intended and detecting any potential damage to pre-existing code as soon as possible.

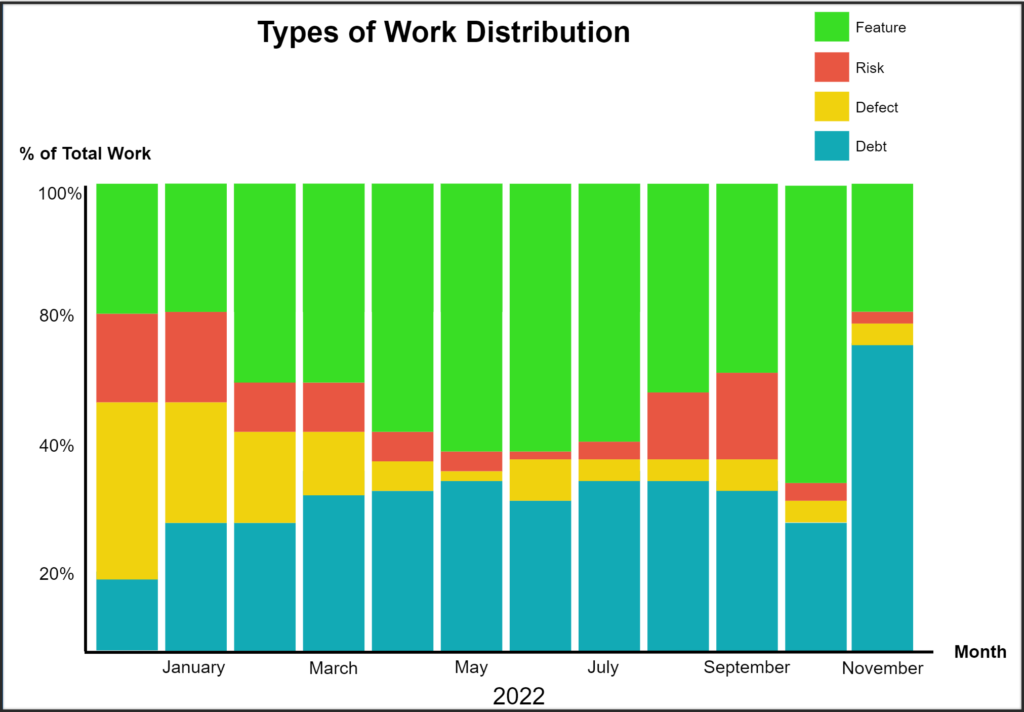

Why should we postpone identifying an issue until the last minute? The later in the delivery flow an issue is detected, the more it costs to fix (cost is a whole other conversation). We started shifting left for quality: running tests at all levels, and moving away from extensive, long-running tests in staging or production environments by reducing their number and replacing them with tests earlier in the flow (shifting the tests left). Most languages have several libraries that support unit testing. Our journey started several years back with Martin Fowler’s article on the practical test pyramid [1] and with adopting the practice of test-driven development (TDD).

Security Shift Left Mindset

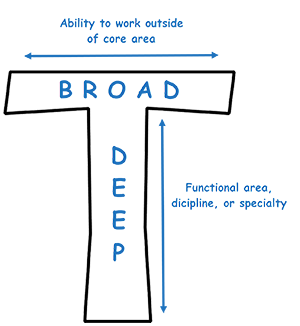

Security has become indispensable to our work in the evolving technology and software development landscape. It’s no longer just about developing features but ensuring they are secure and reliable for our users. Significant improvements have been made at the platform and systems levels. Here’s the kicker: what if we took the same “shift-left” approach to security? Developers can access helpful tools such as profilers, static analysis, and dynamic analysis scanners. The objective is to identify security issues as early as we identify quality issues and defects. Why not make security-based unit tests a core practice of your team?

OWASP Top 10: Key Practices for Secure Development in the coding stage

We want to ensure our code is secure and get feedback early. One way to do this is to keep the OWASP Top Ten [2] security risks in mind while writing and compiling our code, and to use unit tests to help guard against them. The identifiers below (A1, A2, and so on) follow the numbering of the 2017 edition of the list.

1. Injection (OWASP A1): Create input validation tests to mitigate injection flaws.

    // Java
    @Test
    public void testSqlInjectionVulnerability() {
        String maliciousInput = "1'; DROP TABLE users; --";
        assertFalse(isSqlInjectionSafe(maliciousInput));
    }

2. Broken Authentication (OWASP A2): Develop tests to verify session management and authentication.

    // Java
    @Test
    public void testSessionExpiration() throws InterruptedException {
        User testUser = new User("Test User");
        Session testSession = new Session(testUser);
        // Thread.sleep throws the checked InterruptedException, hence the throws clause.
        Thread.sleep(MAX_SESSION_TIME + 1);
        assertFalse(testSession.isValid());
    }

3. Sensitive Data Exposure (OWASP A3): Formulate tests to prevent inadvertent data leaks.

    // Java
    @Test
    public void testDataLeak() {
        User testUser = new User("Test User", "password");
        Logger testLogger = new Logger();
        testLogger.log(testUser);
        assertFalse(testLogger.containsSensitiveData());
    }

4. XML External Entity (XXE) (OWASP A4): Test XML parsers for correct configuration.

    // Java
    @Test
    public void testXXE() {
        String maliciousXML = "..."; // some malicious XML
        assertThrows(XXEException.class, () -> parseXML(maliciousXML));
    }

5. Broken Access Control (OWASP A5): Assert appropriate access levels for different user roles.

    // Java
    @Test
    public void testAdminOnlyAccess() {
        User testUser = new User("Test User", Role.USER);
        Resource restrictedResource = new Resource("Restricted Resource");
        assertThrows(AccessDeniedException.class, () -> restrictedResource.access(testUser));
    }

6. Cross-Site Scripting (XSS) (OWASP A7): Implement tests to check how the application handles untrusted data.

    // Java
    @Test
    public void testXSSVulnerability() {
        String maliciousInput = "<script>alert('XSS');</script>";
        assertFalse(isXssSafe(maliciousInput));
    }

Other examples for the user interface (JavaScript)

1. Cross-Site Scripting (XSS) Protection: To prevent XSS attacks, you should test that your rendering function properly escapes user input.

    describe('XSS Protection', () => {
      it('should escape potential script tags in user input', () => {
        const userInput = '<script>alert("xss")</script>';
        const escapedInput = escapeUserInput(userInput);
        // Expect the HTML-escaped form; the exact entities depend on escapeUserInput.
        expect(escapedInput).toEqual('&lt;script&gt;alert(&quot;xss&quot;)&lt;/script&gt;');
      });
    });

2. Injection and Input Validation: Confirm your software correctly validates the user input and prevents SQL injection.

    describe('Input Validation', () => {
      it('should invalidate input containing SQL Injection attempt', () => {
        const userInput = "'; DROP TABLE users; --";
        expect(isInputValid(userInput)).toBe(false);
      });
    });

3. Authorization/Access Control: Ensure certain UI elements are accessible only to authenticated or authorized users.

    describe('Authorization', () => {
      it('should not show the admin button for non-admin users', () => {
        const user = { isAdmin: false };
        render(<Dashboard user={user} />);
        expect(screen.queryByText('Admin Panel')).not.toBeInTheDocument();
      });
    });

4. Token Handling: Verify that authentication tokens are stored and handled securely.

    describe('Token Handling', () => {
      it('should not store tokens in localStorage', () => {
        setAuthToken('exampleToken');
        expect(window.localStorage.getItem('authToken')).toBeNull();
      });
    });

Check out this YouTube video: DevSecOps wins with Security Unit Tests [3].
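The JavaScript tests above call helpers such as `escapeUserInput` and `setAuthToken` without showing them. As a rough sketch only, and not the implementation behind this post, the block below shows one way such helpers might look: an HTML-escaping function and a token store that keeps the token in a closure rather than in `localStorage`. The entity table, `createTokenStore`, `getAuthToken`, and `clearAuthToken` are assumptions made for illustration; a real project would more likely rely on its framework's built-in escaping.

```javascript
// Hypothetical entity table: each HTML-significant character and its entity.
const HTML_ENTITIES = {
  '&': '&amp;',
  '<': '&lt;',
  '>': '&gt;',
  '"': '&quot;',
  "'": '&#39;',
};

// Sketch of the escapeUserInput helper the XSS test assumes.
// Replaces every HTML-significant character with its entity.
function escapeUserInput(input) {
  return String(input).replace(/[&<>"']/g, (ch) => HTML_ENTITIES[ch]);
}

// Sketch of a token store that never touches localStorage.
// The token lives only in this closure (an assumption, not the post's design).
function createTokenStore() {
  let token = null;
  return {
    setAuthToken(value) { token = value; },
    getAuthToken() { return token; },
    clearAuthToken() { token = null; },
  };
}

// The methods rely on the closure rather than `this`, so they can be destructured.
const { setAuthToken, getAuthToken, clearAuthToken } = createTokenStore();

console.log(escapeUserInput('<script>alert("xss")</script>'));
// prints: &lt;script&gt;alert(&quot;xss&quot;)&lt;/script&gt;
```

An in-memory token does not survive a page reload, which is the trade-off for keeping it out of storage that any script on the page can read.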

What about AI?

As we focus on modern security practices, it’s worth touching on the advantages of using artificial intelligence (AI) in these efforts.

You can find posts and articles from earlier this year (2023) about the security vulnerabilities that code-assistance tools like GitHub Copilot can introduce. However, you can also find posts and articles detailing how quickly the security features of these tools are improving [4].

There is no perfect solution. Still, tools like Copilot can learn from past incidents, analyze patterns, and predict vulnerabilities. They can generate test cases based on software behavior and suggest edge cases, which strengthens our security unit tests. Machine learning models can be trained on numerous secure and insecure code examples, then predict from the patterns they’ve learned whether a new piece of code might contain security vulnerabilities. These tools can flag potential security issues as developers write code, providing immediate feedback and opportunities for learning.

AI can assist in static and dynamic security testing. One example is that AI can help with the time-consuming task of sorting through false positives in static code analysis results. Additionally, AI can identify patterns in code that humans may overlook and point out areas that require further examination. In dynamic analysis, AI can help mimic the actions of real users by interacting with the software as humans would, finding vulnerabilities that manual testing might not uncover. The continuous learning process of AI models also ensures that our testing procedures will evolve alongside new threat patterns.

Wrapping this up

While the OWASP Top Ten may not encapsulate all possible security issues, it provides a robust foundation for our security practices. Incorporating these tests into our daily workflow is a strategic move that will substantially enhance our products’ security. The user-interface tests, in particular, should be designed to verify that the interface effectively implements the relevant security measures. For teams yet to adopt this “shift left” practice, it is imperative to start integrating security testing earlier in the development process and to uphold high security standards.

To stay ahead of evolving threats and reinforce our software’s security, we should also consider the integration of AI into our security strategy. AI can enable us to identify and tackle potential vulnerabilities proactively. However, it is essential to remember that these powerful tools are intended to supplement, not substitute, our existing security practices and intuition.

Let’s focus on enhancing our security practices as we move forward. We should adopt emerging technologies like AI while keeping our main goal in mind – creating secure and reliable software for our users. By implementing strategies such as “shift left” security and utilizing the tools available to test the security of our code, we can stay ahead of evolving security threats and maintain the trust of our users.

Poking Holes

I invite your perspective to analyze this post further – whether by invalidating specific points or affirming others. What are your thoughts?

Let’s talk: phil.clark@rethinkyourunderstanding.

References

1. Martin Fowler (26 February 2018), The Practical Test Pyramid, martinfowler.com, https://martinfowler.com/articles/practical-test-pyramid.html

2. OWASP Top Ten, owasp.org, https://owasp.org/www-project-top-ten/

3. Aimee, Nikki, and featuring Abhay Bhargav (26 September 2021), DevSecOps wins with Security Unit Tests, youtube.com, https://www.youtube.com/watch?v=i34Ihbuslgw

4. Shuyin Zhao (14 February 2023), GitHub Copilot now has a better AI model and new capabilities, github.blog, https://github.blog/2023-02-14-github-copilot-now-has-a-better-ai-model-and-new-capabilities/